Sign Language Translator: Bridging the Gap Between Deaf and Hearing Communities

In a world where communication connects people, the ability to understand one another is essential. Yet, for millions of people who are deaf or hard of hearing, communication barriers still exist.

Sign language serves as their primary means of expression, but not everyone in society knows it.

This is where sign language translators—both human and digital—play a crucial role. They act as bridges between the hearing and the deaf, turning gestures and signs into spoken or written language and vice versa.

In this article, we’ll explore what sign language translators are, how they work, their importance, modern technological advancements, challenges, and the future of sign language translation technology.

1. What Is a Sign Language Translator?

A sign language translator is a tool, system, or person that interprets sign language into spoken or written language and converts spoken or written language back into sign language.

The purpose is simple: to enable clear communication between people who use sign language and those who don’t.

Traditionally, human sign language interpreters have performed this role. They are professionals trained to understand both sign language and spoken languages, translating conversations in real time.

However, with advances in artificial intelligence (AI), machine learning, and computer vision, digital sign language translators—often in the form of software or apps—have emerged.

Websites and tools like SLTranslator are examples of online platforms that use smart algorithms and visual recognition technology to interpret signs and provide instant translation.

This advancement makes sign language translation available to anyone with a camera and an internet connection, at any time.

2. The Importance of Sign Language Translators

The role of a sign language translator goes far beyond converting gestures to words—it’s about inclusion, equality, and accessibility.

For many deaf and hard-of-hearing individuals, everyday activities that hearing people take for granted—like visiting a doctor, attending a lecture, or participating in a job interview—can be challenging without an interpreter.

Here’s why sign language translators are essential:

a. Equal Access to Communication

Sign language translators ensure that deaf individuals can access information, services, and opportunities on an equal basis.

Whether it’s in classrooms, hospitals, courts, or public events, interpreters ensure no one is left behind.

b. Educational Empowerment

In schools and universities, sign language translators help deaf students fully understand lectures, discussions, and presentations. This enables them to participate actively and achieve academic success.

c. Workplace Inclusion

In professional settings, sign language translators facilitate communication between deaf employees and their colleagues, fostering a more inclusive and productive work environment.

d. Emergency and Public Announcements

During emergencies or public broadcasts, sign language translators (human or digital) help communicate vital information quickly and clearly to those who rely on sign language.

e. Social and Cultural Integration

Beyond professional environments, translators help bridge communication in everyday social interactions, breaking down barriers and promoting unity between deaf and hearing communities.

3. Types of Sign Language Translators

There are two main types of sign language translators:

a. Human Sign Language Translators

These are professional interpreters who undergo extensive training to master both sign and spoken languages.

They are skilled at understanding nuances, emotions, and context, making them highly accurate in real-time translation. Human translators are commonly found in legal, medical, and educational settings.

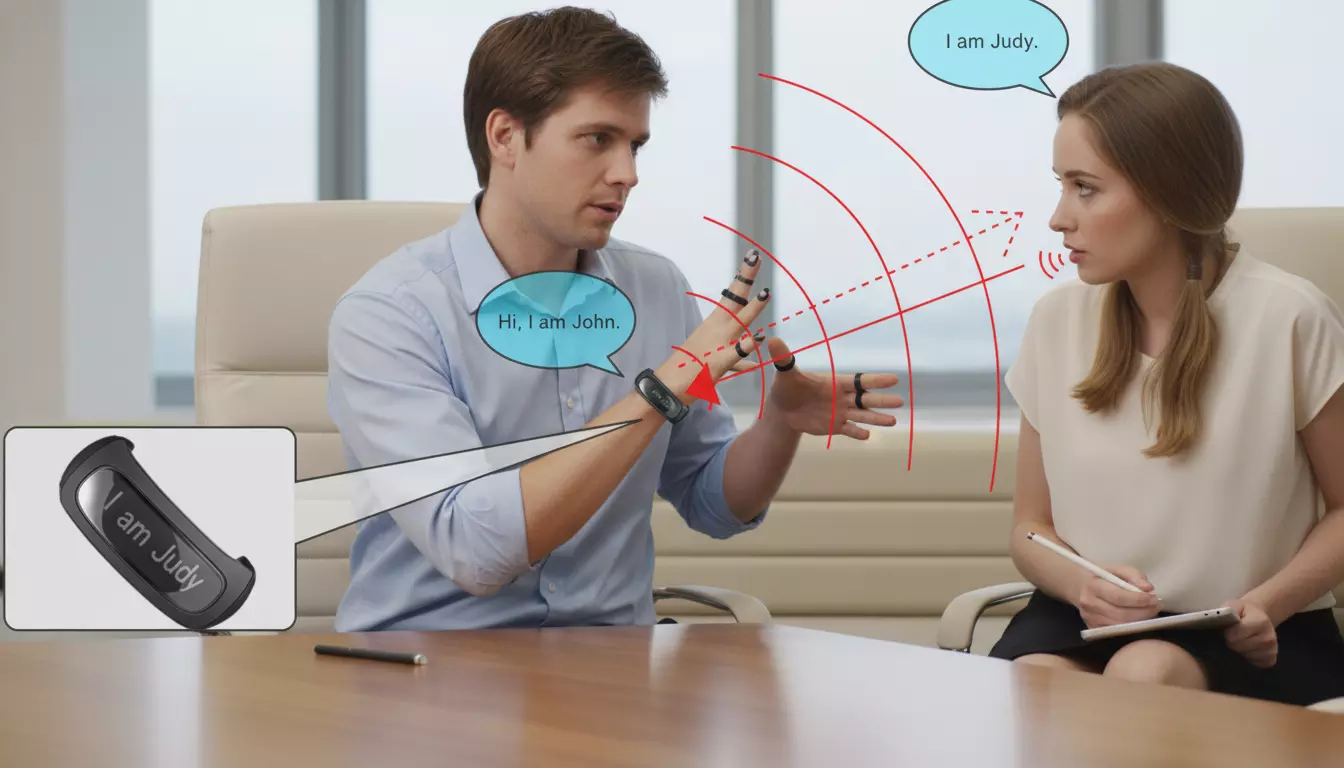

b. Digital Sign Language Translators

Digital translators use AI, machine learning, and computer vision technologies to interpret sign language. They typically operate through:

- Mobile applications

- Web-based platforms (like SLTranslator.com)

- Wearable devices

- Camera-based systems

These digital tools analyze hand movements, facial expressions, and body gestures to interpret meaning and convert them into text or voice output. Similarly, they can also take text or speech and generate corresponding signs using animated avatars or videos.

4. How Do Digital Sign Language Translators Work?

Modern digital translators rely heavily on AI algorithms and machine learning models trained on datasets of sign language video. The general process involves several steps:

Step 1: Gesture Detection

Cameras (such as smartphone or webcam lenses) capture hand and body movements. Specialized software detects and isolates these gestures.

Step 2: Motion Tracking

The system tracks the position, orientation, and sequence of hand movements, facial expressions, and body posture—key components of sign language.
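As a rough illustration of why tracked positions need some preparation before recognition, the sketch below normalizes a set of 2D hand points so that the same hand shape looks identical whether the signer is close to the camera or far away. The function name `normalize_landmarks`, the choice of the first point as the wrist, and the sample coordinates are all assumptions made for this example, not part of any particular product.

```python
import math

def normalize_landmarks(points):
    """Shift points so the first one (assumed to be the wrist) is the origin,
    then scale so the farthest point sits at distance 1."""
    wrist_x, wrist_y = points[0]
    shifted = [(x - wrist_x, y - wrist_y) for x, y in points]
    scale = max(math.hypot(x, y) for x, y in shifted)
    if scale == 0:
        # All points coincide; nothing to scale.
        return shifted
    return [(x / scale, y / scale) for x, y in shifted]

# Hypothetical pixel coordinates: the same hand shape near and far from the camera.
near = [(100, 100), (140, 100), (100, 180)]
far = [(10, 10), (14, 10), (10, 18)]

print(normalize_landmarks(near))
print(normalize_landmarks(far))
```

Both calls print the same normalized points, so later steps can compare hand shapes without being thrown off by distance or position in the frame.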

Step 3: Pattern Recognition

Using trained neural networks, the system compares the tracked movement against its database of known gestures and identifies the specific sign being made.
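To make the idea of matching against known gestures concrete, here is a deliberately simplified sketch. It swaps the neural network for a plain nearest-neighbor lookup, which is much easier to read but far less capable. The `KNOWN_SIGNS` table, its three-number feature vectors, and the `max_distance` threshold are hypothetical values chosen only for illustration.

```python
import math

# Hypothetical reference database: sign label -> feature vector.
KNOWN_SIGNS = {
    "HELLO": [0.9, 0.1, 0.8],
    "THANKS": [0.2, 0.7, 0.1],
    "YES": [0.1, 0.1, 0.9],
}

def recognize(features, max_distance=0.5):
    """Return the label of the closest known sign, or None if nothing is close enough."""
    best_label, best_distance = None, float("inf")
    for label, reference in KNOWN_SIGNS.items():
        distance = math.dist(features, reference)  # Euclidean distance
        if distance < best_distance:
            best_label, best_distance = label, distance
    return best_label if best_distance <= max_distance else None

print(recognize([0.85, 0.15, 0.75]))  # close to the HELLO reference
print(recognize([5.0, 5.0, 5.0]))     # far from every reference
```

The distance threshold matters: without it, the system would always report some sign, even when the user is only scratching their nose.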

Step 4: Language Translation

Once the system identifies the sign, it translates it into the corresponding spoken or written language (e.g., English, Urdu, or Spanish).

Step 5: Output Display

Finally, the translation appears as on-screen text or synthesized speech. Some advanced translators also display animated avatars performing the translated signs for visual learning.

This combination of computer vision, natural language processing (NLP), and speech synthesis is what makes real-time translation possible.
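One practical detail between recognition and output is that a camera produces many frames per second, so a single sign is recognized many times in a row, with occasional one-frame mistakes. The sketch below shows one simple way to turn such per-frame labels into a clean sequence of signs. The function name `collapse_frames`, the `min_frames` threshold, and the sample labels are assumptions for this example; real systems typically use more sophisticated sequence models.

```python
from itertools import groupby

def collapse_frames(frame_labels, min_frames=3):
    """Turn noisy per-frame labels into a sequence of signs.

    Runs shorter than min_frames are treated as noise, None means no sign
    was detected, and a sign split by noise is reported only once.
    Simplification: a sign deliberately repeated back to back is merged too.
    """
    signs = []
    for label, run in groupby(frame_labels):
        if label is None:
            continue
        if len(list(run)) >= min_frames and (not signs or signs[-1] != label):
            signs.append(label)
    return signs

# Hypothetical recognizer output for ten consecutive frames.
frames = ["HELLO"] * 4 + [None] * 2 + ["YES"] + ["THANKS"] * 3
print(" ".join(collapse_frames(frames)))
```

Here the single stray "YES" frame is discarded as noise, and the output text is "HELLO THANKS".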

5. Popular Sign Language Translator Tools and Projects

Several innovative tools and research projects have emerged worldwide, aiming to make sign language translation more accessible and accurate.

1. SLTranslator.com

A free and user-friendly online platform, SLTranslator.com allows users to translate sign language gestures into text or speech.

It supports multiple sign languages and is designed for accessibility and ease of use, making it ideal for both learners and daily communication.

2. Google’s Sign Language Projects

Google has been experimenting with AI-based gesture recognition and real-time translation using TensorFlow. Projects like “Sign Language Interpreter” aim to bring real-time sign recognition to Android devices.

3. MotionSavvy UNI

This innovative device uses motion sensors to detect hand movements and translate them into speech. It was one of the first commercial tools designed specifically for deaf users.

4. Hand Talk App

A mobile app that converts speech and text into Brazilian Sign Language (Libras) using a 3D avatar named “Hugo.” It’s popular among educators and deaf communities in Brazil.

5. SignAll

SignAll is a U.S.-based system that uses cameras and AI to translate American Sign Language (ASL) in real time. It’s often used in educational and customer service environments.

6. Challenges in Sign Language Translation

While progress in sign language translation technology is impressive, several challenges remain before achieving perfect real-time translation:

a. Variety of Sign Languages

There isn’t just one universal sign language. There are hundreds worldwide, such as ASL (American Sign Language), BSL (British Sign Language), ISL (Indian Sign Language), and PSL (Pakistani Sign Language).

Each has its own grammar, vocabulary, and regional variations.

b. Complexity of Gestures

Sign language relies not just on hand shapes, but also on facial expressions, body posture, and movement speed. Capturing and interpreting all these elements accurately is technologically complex.

c. Limited Data for Training

AI translators require large datasets of annotated sign language videos to learn effectively. Unfortunately, such datasets are limited, especially for lesser-known sign languages.

d. Real-Time Accuracy

While machines can translate signs quickly, maintaining natural speed and contextual understanding is difficult. Human interpreters still outperform machines in terms of emotional accuracy and nuance.

e. Accessibility and Cost

Although some digital translators are free, advanced systems with wearable sensors or 3D cameras can be expensive and less accessible in developing countries.

7. The Role of AI and Machine Learning

Artificial Intelligence is at the heart of modern sign language translation. Using deep learning techniques, AI models learn patterns from thousands of sign language videos and improve accuracy over time.

Computer vision helps detect gestures, while NLP (Natural Language Processing) helps interpret meaning and grammar.

For instance, AI can distinguish between similar signs by analyzing subtle differences in motion or context.
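As a small illustration of telling signs apart by motion, the sketch below compares a recorded movement against two stored templates using dynamic time warping (DTW), a classic technique that tolerates differences in signing speed. It is shown here in place of a deep learning model purely for readability. The templates and the observed trajectory are hypothetical hand-height values invented for this example.

```python
def dtw_distance(a, b):
    """Dynamic time warping distance between two sequences of numbers."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = best alignment cost of a[:i] against b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]

# Hypothetical hand-height trajectories for two signs with the same hand shape.
template_up = [0.0, 0.5, 1.0]
template_down = [1.0, 0.5, 0.0]

# The same upward motion, signed at half the speed.
observed = [0.0, 0.0, 0.5, 0.5, 1.0, 1.0]

print(dtw_distance(observed, template_up))
print(dtw_distance(observed, template_down))
```

The observed motion matches the upward template with zero cost despite being slower, while the downward template scores clearly worse, so the two signs can be separated by movement alone.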

With continuous training, these systems are becoming more accurate and capable of understanding complex phrases, idioms, and regional variations.

8. The Future of Sign Language Translation

The future looks bright for sign language translation technology. Researchers are working on creating systems that can perform real-time, context-aware translation on smartphones or AR (Augmented Reality) glasses.

Here’s what we can expect in the coming years:

a. Integration with Everyday Devices

Sign language translation could soon be built into smartphones, smart TVs, and wearable gadgets, enabling seamless conversations between deaf and hearing users.

b. Multilingual and Cross-Language Support

Future translators may support multiple sign languages and automatically detect which one is being used, offering instant cross-language interpretation.

c. Educational Tools

Enhanced translation tools will make it easier for people to learn sign language interactively, using real-time feedback and visual tutorials.

d. Realistic Avatars and 3D Animations

Virtual avatars will become more lifelike, capable of conveying not just signs but also emotions, tone, and context.

e. Universal Accessibility

As costs decrease and internet accessibility grows, free online translators like SLTranslator.com could reach millions of users worldwide, promoting global inclusivity.

9. How Sign Language Translators Are Changing Lives

The real impact of sign language translators can be seen in the stories of those whose lives they transform.

- In education, deaf students can now attend mainstream schools with the help of digital interpreters.

- In healthcare, doctors can communicate effectively with deaf patients.

- In business, companies can hire deaf employees with confidence that communication will not be a barrier.

- In social settings, families and friends can interact without frustration or miscommunication.

Each successful translation is a step toward an inclusive and understanding society—one where communication is not limited by sound, but expanded by innovation.

10. Conclusion

The development of sign language translators, both human and digital, represents one of the most meaningful advances in communication. They embody the values of empathy, inclusion, and equality.

From professional interpreters to AI-powered tools, these translators are breaking down barriers that have existed for centuries.

While challenges remain—such as improving accuracy, expanding sign language databases, and addressing accessibility—ongoing innovation promises a future where communication between deaf and hearing communities will be seamless and natural.

In the end, sign language translators remind us of one simple truth: language is not just about words—it’s about connection.